GPT Image 2 Launches Tomorrow on fal.ai

April 21, 2026. The fal-ai/gpt-image-2 and fal-ai/gpt-image-2/edit endpoints flip on tomorrow. Here is exactly what changes for your pipeline, what to flip first, and the code you ship tonight so you wake up ready.

The waiting is over. Tomorrow, April 21, 2026, fal.ai turns on GPT Image 2 as a first-class model. Two endpoints go live at the same moment:

- fal-ai/gpt-image-2 for text-to-image

- fal-ai/gpt-image-2/edit for reference-image editing

No partner preview, no waitlist gate, no progressive rollout. If you have a FAL_KEY tomorrow, you can call it.

The headline change, in one sentence

This is the first OpenAI image model where you can ship typography-critical output without a human review in the loop. You get near-perfect glyph accuracy, neutral color, native 4K, and single-pass inference that lands near 3 seconds at medium quality. The rest of this post is about what to do with that.

What flips on tomorrow

Text rendering. Over 99 percent glyph accuracy on English. CJK scripts finally reliable. Multi-word signs, UI labels, comic captions, and long paragraphs come out spelled and kerned on the first pass. The old pattern of rendering, reviewing, re-rendering, hoping, is done.

Photorealism. Over 70 percent of blind test participants misclassify GPT Image 2 portraits as real photographs. Skin micro detail is cleaner, catchlights are consistent, and hands and fingers land without the anomalies that used to send 1.5 output back for revision.

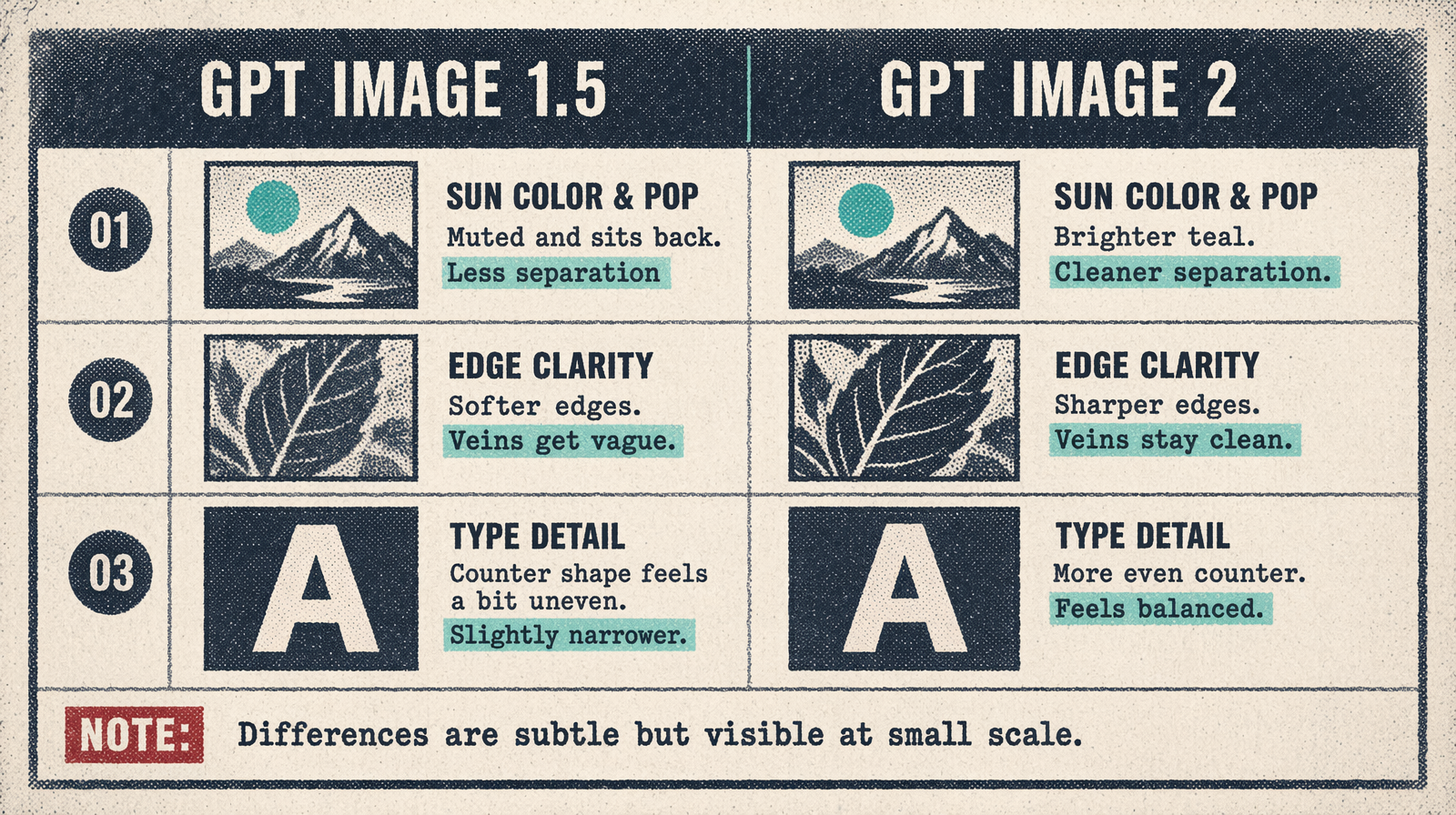

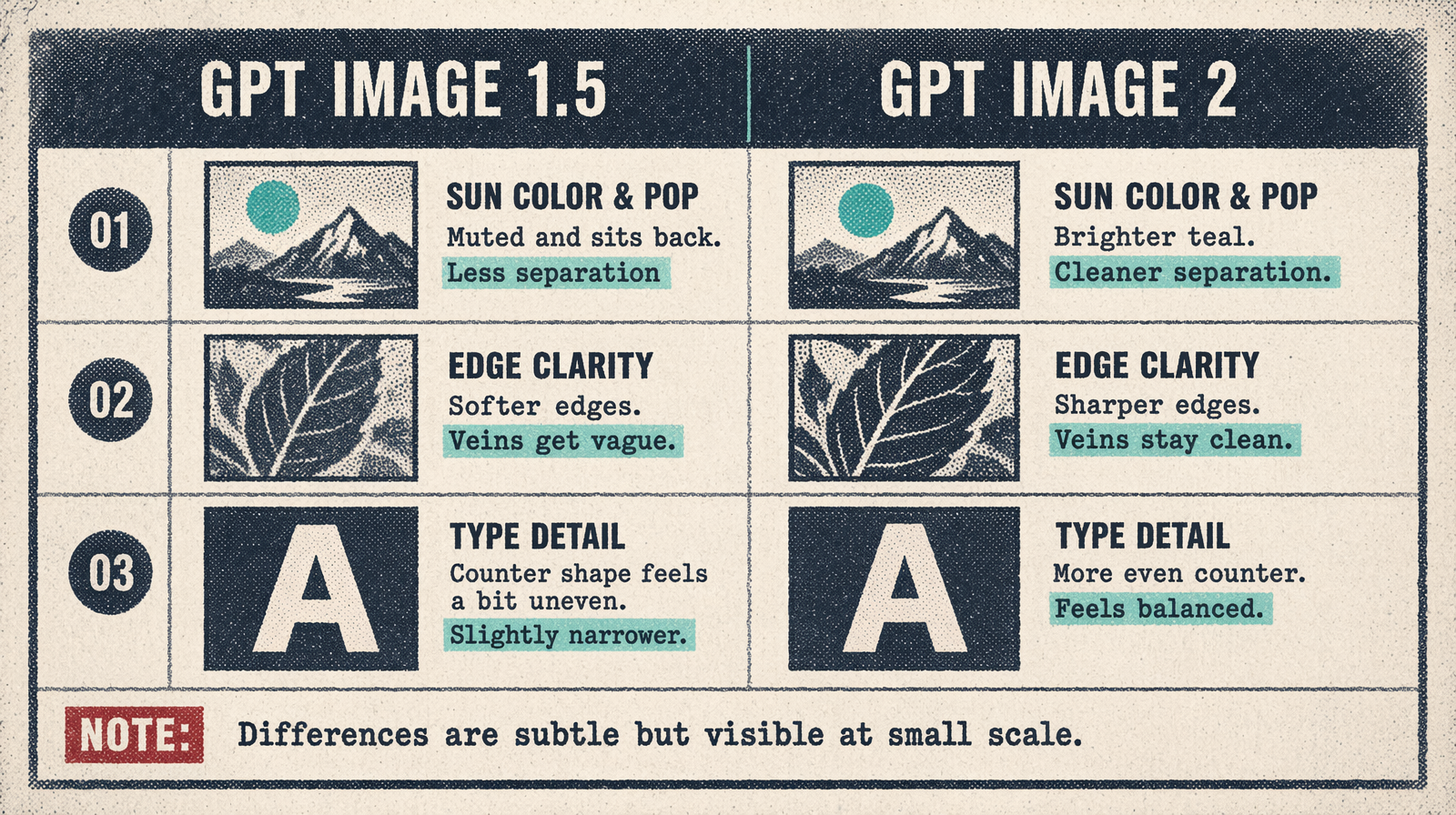

Neutral color. The faint yellow cast that everyone shipping GPT Image 1.5 in production learned to post-correct is gone. Daylight renders as daylight. Gray is gray.

Native 4K. Three new sizes join the tier (2048x2048, 1920x1080, and 2560x1440), plus a native 3840x2160 hero mode. Previously you upscaled a 1792x1024 render in post. Now you do not.

Single-pass latency. The old architecture ran a planner turn before the image turn. GPT Image 2 hands you pixels directly. Expect p50 near 3 seconds at medium quality, 8 to 12 seconds at high quality 4K.

Edit fidelity. The /edit endpoint now takes an input_fidelity parameter that locks the subject while you restage the background, swap typography, or change the weather. Corporate headshots with wardrobe and backdrop swaps become a real workflow.
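A minimal sketch of how an edit request body might be assembled. Only the input_fidelity parameter is named in this post; the `image_urls` field, the `"high"` and `"low"` values, and the `buildEditInput` helper are assumptions for illustration, so check them against the published endpoint schema before relying on them.

```typescript
// Hypothetical payload shape for fal-ai/gpt-image-2/edit.
// `image_urls` and the fidelity values are assumptions, not confirmed schema.
interface EditInput {
  prompt: string;
  image_urls: string[];
  input_fidelity: "low" | "high";
}

// Build the request body; `lockSubject` maps to the assumed "high" fidelity value.
function buildEditInput(
  prompt: string,
  referenceUrls: string[],
  lockSubject: boolean = true,
): EditInput {
  if (referenceUrls.length === 0) {
    throw new Error("the edit endpoint needs at least one reference image");
  }
  return {
    prompt: prompt.trim(),
    image_urls: [...referenceUrls], // copy so callers can reuse their array
    input_fidelity: lockSubject ? "high" : "low",
  };
}
```

The returned object would be passed as the `input` field of a `fal.subscribe("fal-ai/gpt-image-2/edit", { input })` call.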

What to ship tonight

Your code should not wait until morning. Here is the shape that flips on schedule.

```typescript
import { fal } from "@fal-ai/client";

fal.config({ credentials: process.env.FAL_KEY });

// Default to the current model; the env var decides when to flip.
const IMAGE_MODEL = process.env.FAL_IMAGE_MODEL ?? "fal-ai/gpt-image-1.5";

export async function render(prompt: string) {
  // subscribe() queues the request and resolves once the result is ready.
  return fal.subscribe(IMAGE_MODEL, {
    input: {
      prompt,
      image_size: "1024x1024",
      quality: "high",
      num_images: 1,
      output_format: "png",
    },
  });
}
```

At 00:01 UTC on April 21, set FAL_IMAGE_MODEL=fal-ai/gpt-image-2 in your environment. Redeploy. Done. No code change, one env var, your entire production pipeline migrates in one roll.
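Because the whole migration hangs on one env var, a typo in FAL_IMAGE_MODEL would only surface on the first failed request. One way to catch it at startup is an allow-list check, sketched here; `resolveImageModel` and the allow-list are hypothetical helpers, not part of the fal client.

```typescript
// Model ids named in this post; extend the list if you route to other models.
const KNOWN_IMAGE_MODELS = ["fal-ai/gpt-image-1.5", "fal-ai/gpt-image-2"] as const;
type ImageModel = (typeof KNOWN_IMAGE_MODELS)[number];

const FALLBACK_IMAGE_MODEL: ImageModel = "fal-ai/gpt-image-1.5";

// Resolve the model id from an env-like object, failing fast on unknown values.
function resolveImageModel(env: Record<string, string | undefined>): ImageModel {
  const raw = env["FAL_IMAGE_MODEL"]?.trim();
  if (!raw) return FALLBACK_IMAGE_MODEL; // unset or blank: stay on the old model
  const match = KNOWN_IMAGE_MODELS.find((model) => model === raw);
  if (match === undefined) {
    throw new Error(`Unknown FAL_IMAGE_MODEL: ${raw}`);
  }
  return match;
}
```

In a Node service you would call `resolveImageModel(process.env)` once at boot, so a bad value stops the deploy instead of degrading traffic.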

Pricing expectations

fal.ai publishes the real numbers at launch tomorrow. Based on partner-access billing, the tier shape will look like this:

- 1024x1024 low: ~$0.01

- 1024x1024 medium: ~$0.04

- 1024x1024 high: ~$0.13

- 1920x1080 high: ~$0.37

- 2560x1440 high: ~$0.66

- 3840x2160 high: ~$1.48

Expect the final sheet at fal.ai/pricing to land within 25 percent of these numbers. Budget your April traffic at these rates and you will be close.
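To turn those estimates into a budget line, a small calculator like the following may help. It is a sketch: the prices are the pre-launch estimates listed above, the 25 percent band mirrors the stated tolerance, and `estimateMonthlyBudget` is a hypothetical helper.

```typescript
// Estimated per-image prices in USD, keyed by "size:quality".
// These are the pre-launch estimates from this post, not final pricing.
const ESTIMATED_PRICE_USD = new Map<string, number>([
  ["1024x1024:low", 0.01],
  ["1024x1024:medium", 0.04],
  ["1024x1024:high", 0.13],
  ["1920x1080:high", 0.37],
  ["2560x1440:high", 0.66],
  ["3840x2160:high", 1.48],
]);

interface BudgetEstimate {
  expected: number; // USD at the estimated rate
  low: number; // USD if final pricing lands 25 percent lower
  high: number; // USD if final pricing lands 25 percent higher
}

function estimateMonthlyBudget(size: string, quality: string, imagesPerMonth: number): BudgetEstimate {
  const unit = ESTIMATED_PRICE_USD.get(`${size}:${quality}`);
  if (unit === undefined) {
    throw new Error(`No estimate for ${size} at ${quality} quality`);
  }
  const toCents = (usd: number) => Math.round(usd * 100) / 100;
  const expected = unit * imagesPerMonth;
  return {
    expected: toCents(expected),
    low: toCents(expected * 0.75),
    high: toCents(expected * 1.25),
  };
}
```

For example, 10,000 medium 1024x1024 images come to about $400 at the estimated rate, with a plausible range of roughly $300 to $500.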

Who should switch on day one

If your product renders text on images (UI mockups, packaging, infographics, posters, YouTube thumbnails, book covers), flip the flag tomorrow morning. The typography gain removes the human review cost that dominated your editorial pipeline.

If your product is photorealistic without typography (product shots, real estate hero stills, food editorial), flip tomorrow afternoon after a quick bench. The 1.5 output was already good; GPT Image 2 is better, but the delta is smaller.

If your product is stylized artistic work (illustration, moodboard, painterly marketing), run both in parallel for a week and pick per brief. GPT Image 2 wins on most prompts; Flux 2 Pro and stylized model siblings still win on a few.

Who should hold for a week

If you are under an enterprise SLA that requires a bench before endpoint swaps, run your golden test suite tomorrow evening and flip mid-week. fal.ai keeps fal-ai/gpt-image-1.5 live through May 12 while DALL-E 2 and 3 retire, so you have runway to migrate deliberately.
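If you need a written go/no-go rule for that bench, one option is to record a pass flag and a latency per golden prompt and gate the flip on both. The sketch below assumes you already have those results; the `GoldenResult` shape, the `readyToFlip` helper, and any latency threshold you pass in are hypothetical.

```typescript
// One row per golden prompt, filled in by your own review or diff tooling.
interface GoldenResult {
  prompt: string;
  passed: boolean;
  latencyMs: number;
}

// Median (p50) latency across the suite.
function medianLatencyMs(results: GoldenResult[]): number {
  if (results.length === 0) throw new Error("golden suite is empty");
  const sorted = results.map((r) => r.latencyMs).sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

// Flip only when every prompt passed and p50 stays within the latency budget.
function readyToFlip(results: GoldenResult[], maxP50Ms: number) {
  const p50Ms = medianLatencyMs(results);
  const failures = results.filter((r) => !r.passed).map((r) => r.prompt);
  return { ok: failures.length === 0 && p50Ms <= maxP50Ms, failures, p50Ms };
}
```

Keeping the list of failed prompts in the result makes it easy to attach to the change ticket your SLA process asks for.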

Checklist for tonight

- [ ] Pin the model string behind an env var if you have not already.

- [ ] Add a feature flag that can flip fal-ai/gpt-image-2 on per cohort if you want a graduated rollout.

- [ ] Prepare your golden test suite with the 10 to 30 prompts that define your product's output.

- [ ] Subscribe to the fal.ai changelog RSS so you get the exact minute the endpoint lights up.

- [ ] Set a Slack reminder for 09:00 your local time tomorrow to run the flip.
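For the per-cohort flag in the checklist, a deterministic bucket per user keeps each user on the same model across requests. The following is one possible sketch, using an FNV-1a-style hash over the user id; `cohortBucket` and `modelForUser` are hypothetical helpers, and a feature-flag service you already run would do the same job.

```typescript
// Map a user id to a stable bucket in [0, 100) with an FNV-1a-style hash
// computed over UTF-16 code units.
function cohortBucket(userId: string): number {
  let hash = 0x811c9dc5; // 32-bit FNV offset basis
  for (let i = 0; i < userId.length; i++) {
    hash ^= userId.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0; // multiply by FNV prime, keep unsigned
  }
  return hash % 100;
}

// Users whose bucket falls below the rollout percentage get the new model.
function modelForUser(userId: string, rolloutPercent: number): string {
  return cohortBucket(userId) < rolloutPercent
    ? "fal-ai/gpt-image-2"
    : "fal-ai/gpt-image-1.5";
}
```

Raising `rolloutPercent` from 0 toward 100 widens the cohort without reshuffling users who were already moved.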

The bigger picture

This is the first release where the typography wall that has held back image models since DALL-E 2 finally comes down. Everything your design team has produced manually (menu boards, app mockups, book covers, infographics) is now renderable at pace. That does not replace design craft. It replaces the 80 percent of creative work that was mechanical, repeatable, and never deserved senior attention in the first place.

fal.ai puts GPT Image 2 next to 600 other models under the same FAL_KEY and the same queue. No second invoice, no second auth, no second SDK. Tomorrow you run it in production.

See you at the endpoint.